Stop Finding and Start Fixing with AI Security Suggestions

AI-powered security fixes are automated tools that detect security vulnerabilities in your code and generate verified patches, often as pull requests, so that developers review a proposed fix instead of writing one from scratch.

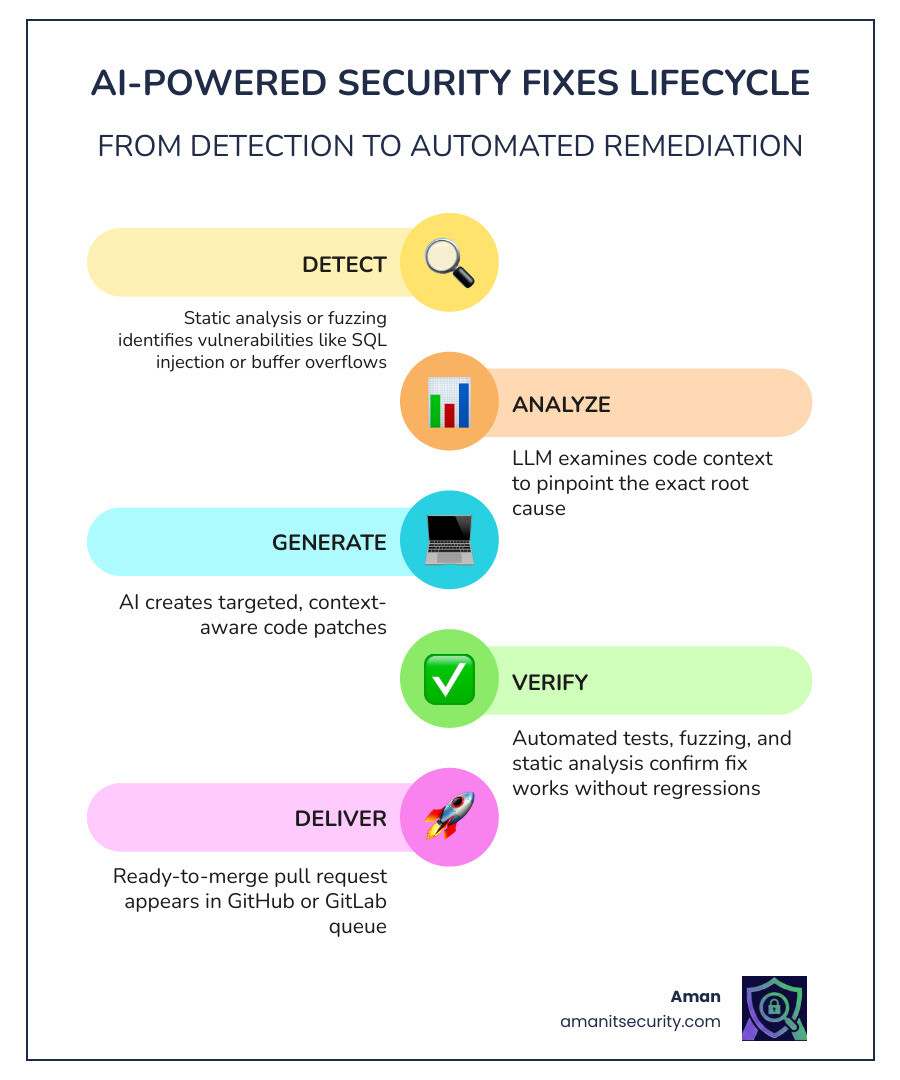

Here’s how they work at a glance:

- Detect — Static analysis or fuzzing tools find a vulnerability (e.g., SQL injection, buffer overflow)

- Analyze — An LLM reads the code context and identifies the root cause

- Generate — The AI proposes a targeted code fix

- Verify — Automated tests confirm the patch works and doesn’t break anything

- Deliver — A ready-to-merge pull request lands in your GitHub or GitLab queue

If you’re a DevSecOps engineer, you already know the pain. Vulnerability backlogs grow faster than teams can clear them. Security alerts pile up. Developers are pulled away from shipping features to chase down findings from SAST tools. And Mean Time to Remediate (MTTR) — one of the most watched KPIs in security — keeps climbing.

The problem isn’t finding vulnerabilities. Modern tools are remarkably good at that. The bottleneck is fixing them.

AI-powered security fixes flip the script. Instead of handing developers a list of problems, these tools hand them solutions: validated, context-aware patches ready to review and commit. Early results back this up. Some vendors report cutting vulnerability remediation time by 80%, and Google’s internal use of LLM-based patching resulted in hundreds of bugs fixed at scale.

I’m Zezo Hafez, an AWS and Azure certified IT Manager with over 15 years of web development experience, and I’ve seen how integrating AI-powered security fixes into DevSecOps pipelines transforms security from a bottleneck into a built-in safeguard. In the sections ahead, I’ll break down exactly how these tools work, which ones lead the field, and how you can implement them without disrupting your team.

What are AI-Powered Security Fixes?

We’ve all been there: a security scan finishes, and suddenly you’re staring at a spreadsheet of 400 “critical” vulnerabilities. Your heart sinks because you know that “finding” the bug was the easy part. The hard part is the hours of manual debugging, context-switching, and testing required to fix it.

AI-powered security fixes represent the evolution of AppSec. By leveraging Large Language Models (LLMs) like Gemini or GPT-4, these systems don’t just point at a line of code and scream “Danger!” Instead, they act as a virtual security engineer. They ingest the vulnerable code, understand the surrounding logic, and draft a surgical correction.

Think of it as a side-by-side code diff where the left side is your “oops” and the right side is a professionally written, secure alternative. This isn’t just a simple find-and-replace; it’s a context-aware transformation that understands whether you need a strncpy instead of a strcpy or if you need to implement parameterized queries to stop a SQL injection in its tracks.
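
To make the “left side vs. right side” idea concrete, here is a minimal sketch of the kind of diff such a tool might propose for a SQL injection. It uses Python’s built-in `sqlite3` module; the `users` table and the function names are made up for illustration and are not taken from any specific tool’s output.

```python
import sqlite3


def find_user_unsafe(conn, name):
    # The "oops" side: user input is concatenated into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = "SELECT id, name FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # The fixed side: the value is passed as a bound parameter,
    # so the driver never parses it as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


def make_demo_db():
    # Hypothetical in-memory table used only for this demonstration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany(
        "INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)]
    )
    return conn
```

Calling both functions with the payload `x' OR '1'='1` shows the difference: the unsafe version returns every row in the table, while the parameterized version treats the payload as a literal name and returns nothing.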

To understand the impact, let’s look at the Role of Automated Security Tools in this new landscape. Traditional tools were “detectors.” AI tools are “remediators.”

Manual Patching vs. AI-Powered Security Fixes

| Feature | Manual Patching | AI-Powered Security Fixes |
|---|---|---|
| Speed | Hours to days per bug | Minutes |
| Developer Effort | High (Context switching) | Low (Review & Merge) |
| Consistency | Varies by developer skill | High (Standardized best practices) |
| Scalability | Linear (Needs more people) | Exponential (Handles thousands of bugs) |
| Verification | Manual unit testing | Automated fuzzing & diff testing |

The Shift from Detection to Autonomous Action

We are moving away from “alert noise” toward “actionable intelligence.” In the past, security teams were the “Department of No,” slowing down releases to fix bugs. Today, we use “agentic AI”—AI that can take independent action—to proactively suggest guardrails.

This shift is crucial because software’s DNA has changed. With the rise of AI-generated code, the volume of software being produced is exploding. Humans simply cannot keep up with the detection-to-fix cycle anymore. Proponents argue that AI-powered patching is one of the few ways to scale defenses at the same speed at which attackers are using AI to find holes.

By moving to a remediation-first mindset, we empower developers. When a tool like ours at Aman Security provides an instant AI explanation and a fix suggestion, it removes the “I don’t know how to fix this” hurdle that often stalls development.

Integrating AI into the DevSecOps Pipeline

The magic of AI-powered security fixes happens when they live where developers live. You shouldn’t have to log into a separate “Security Portal” to see suggestions.

- IDE Plugins: Tools like Snyk and GitHub Copilot provide “Zap” icons or Code Lens suggestions directly in VS Code or JetBrains. You see a squiggly line, click “Fix,” and the code updates.

- Pull Requests: This is the gold standard. When a developer pushes code, the CI/CD pipeline runs a scan. If a vulnerability is found, the AI automatically comments on the PR with a fix or opens a separate “fix-up” branch.

- GitHub & GitLab Integration: Most modern solutions integrate via GitHub Actions or GitLab CI. For example, GitHub’s “autofix” feature for code scanning uses CodeQL results to suggest changes directly in the PR experience.

How AI Automates Vulnerability Remediation Under the Hood

How does a machine actually “understand” a security flaw? It’s not magic; it’s a sophisticated pipeline of data extraction and reasoning.

First, the system needs context. It doesn’t just look at one line; it looks at the “data flow.” If a variable is tainted at the API endpoint and used in a database query three files away, the AI needs to see that entire path. This is often powered by CodeQL, which treats code like a searchable database, with the findings exchanged as SARIF (Static Analysis Results Interchange Format) files.
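
As a rough sketch of what “seeing the entire path” can look like in practice, the snippet below walks a parsed SARIF 2.1.0 document and pulls out the rule ID, the reported location, and the data-flow steps for each result. The field names (`runs`, `results`, `ruleId`, `codeFlows`, `threadFlows`, `physicalLocation`) come from the SARIF format; the sample finding, file names, and helper names are invented for illustration.

```python
def _physical(location):
    # Reduce a SARIF location object to a (file, line) pair.
    phys = location.get("physicalLocation", {})
    uri = phys.get("artifactLocation", {}).get("uri")
    line = phys.get("region", {}).get("startLine")
    return (uri, line)


def _flow_steps(result):
    # Flatten every code flow into an ordered list of (file, line) steps.
    steps = []
    for flow in result.get("codeFlows", []):
        for thread in flow.get("threadFlows", []):
            for step in thread.get("locations", []):
                steps.append(_physical(step.get("location", {})))
    return steps


def extract_findings(sarif_doc):
    # sarif_doc is the already-parsed JSON (a dict) of a SARIF file.
    findings = []
    for run in sarif_doc.get("runs", []):
        for result in run.get("results", []):
            locations = result.get("locations", [])
            findings.append({
                "rule_id": result.get("ruleId"),
                "message": result.get("message", {}).get("text", ""),
                "sink": _physical(locations[0]) if locations else None,
                "path": _flow_steps(result),
            })
    return findings


def _loc(uri, line):
    # Helper for building the hypothetical sample below.
    return {"physicalLocation": {
        "artifactLocation": {"uri": uri},
        "region": {"startLine": line},
    }}


# Hypothetical finding: tainted input enters in app/api.py and
# reaches a query in app/db.py.
SAMPLE_SARIF = {
    "version": "2.1.0",
    "runs": [{
        "tool": {"driver": {"name": "ExampleScanner"}},
        "results": [{
            "ruleId": "py/sql-injection",
            "message": {"text": "Query built from user-controlled input."},
            "locations": [_loc("app/db.py", 42)],
            "codeFlows": [{"threadFlows": [{"locations": [
                {"location": _loc("app/api.py", 10)},
                {"location": _loc("app/db.py", 42)},
            ]}]}],
        }],
    }],
}
```

With a real scan you would load the file with `json.load` and pass the resulting dict to `extract_findings`; the `path` list is what tells the model which files and lines to read beyond the flagged one.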

Once the context is extracted, it’s fed into an LLM with a specific “prompt.” This isn’t a simple “Fix this code” prompt. It’s a multi-stage instruction that includes:

- The vulnerability type (e.g., CWE-89: SQL Injection).

- The specific sink (where the crash or leak happens).

- The relevant code snippets.

- Style guidelines to ensure the fix looks like it was written by the original author.
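
A multi-part instruction like this could be assembled along the following lines. This is only a sketch of the idea: the `RepairContext` class, its field names, and the prompt wording are assumptions made for illustration, not the format any particular vendor uses.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class RepairContext:
    # Hypothetical container for the four ingredients listed above.
    cwe: str
    sink: str
    snippets: List[str]
    style_notes: List[str] = field(default_factory=list)


def build_repair_prompt(ctx):
    # Assemble the sections in a fixed order so every finding
    # produces a prompt with the same structure.
    parts = [
        "You are repairing a security vulnerability.",
        f"Vulnerability type: {ctx.cwe}",
        f"Sink: {ctx.sink}",
        "Relevant code:",
    ]
    for number, snippet in enumerate(ctx.snippets, start=1):
        parts.append(f"--- snippet {number} ---")
        parts.append(snippet)
    if ctx.style_notes:
        parts.append("Match the existing style:")
        parts.extend(f"* {note}" for note in ctx.style_notes)
    parts.append("Return only the changed lines as a unified diff.")
    return "\n".join(parts)
```

Keeping the structure fixed makes it easier to compare results across findings and to see which ingredient was missing when a suggested fix goes wrong.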

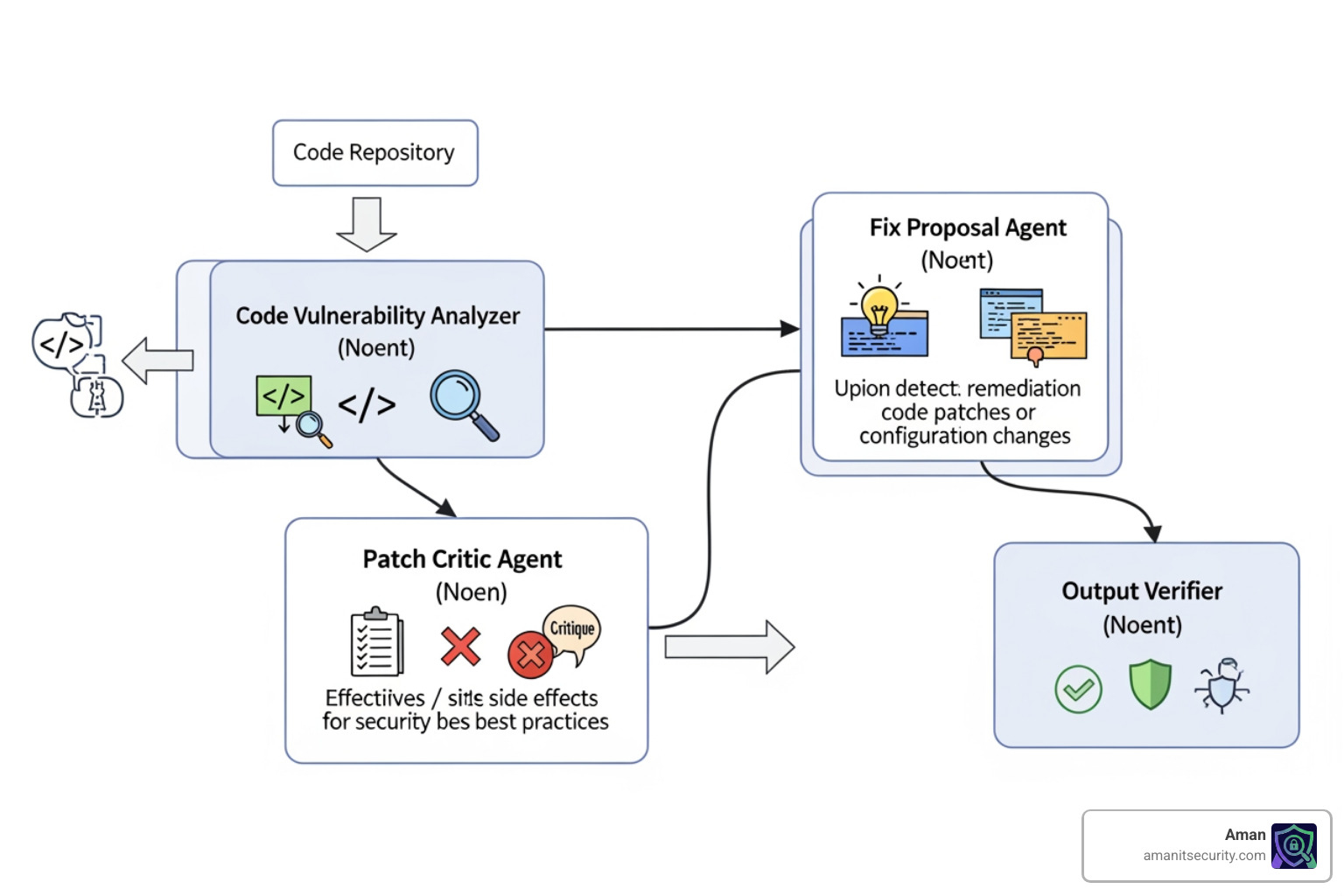

According to Google’s study on generic program repair agents, using a multi-agent system—where one AI proposes a fix and another AI “critiques” it for regressions—significantly improves the quality of the final patch.
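
The propose-and-critique idea can be sketched as a simple loop. The code below is not Google’s system; `propose` and `critique` are placeholder callables standing in for two model calls, and the stub versions exist only so the loop can be run end to end.

```python
def repair_with_critic(code, propose, critique, max_rounds=3):
    # propose(code, feedback) -> candidate patch (str)
    # critique(code, candidate) -> objection (str), or "" to accept
    feedback = ""
    for _ in range(max_rounds):
        candidate = propose(code, feedback)
        feedback = critique(code, candidate)
        if not feedback:
            return candidate
    return None  # no candidate survived review; hand off to a human


ORIGINAL = 'cursor.execute("SELECT * FROM t WHERE id = " + uid)'
PATCHED = 'cursor.execute("SELECT * FROM t WHERE id = ?", (uid,))'


def stub_propose(code, feedback):
    # Stand-in for the "fixer" model: the first attempt lazily deletes
    # the code; once it receives feedback it returns a real fix.
    return PATCHED if feedback else ""


def stub_critique(code, candidate):
    # Stand-in for the "critic" model: reject patches that remove the logic.
    if not candidate.strip():
        return "patch deletes the original logic"
    return ""
```

Here `repair_with_critic(ORIGINAL, stub_propose, stub_critique)` rejects the empty first attempt and returns the parameterized query on the second round. Capping the rounds keeps a stubborn finding from looping forever.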

Verification Techniques for AI-Powered Security Fixes

You wouldn’t trust a random stranger to rewrite your production code, so why trust an AI? Verification is the “trust but verify” pillar of automated security.

We use several layers of validation:

- Syntactic Checks: Does the code even compile? (You’d be surprised how often early LLMs failed this).

- Unit Tests: Does the new code pass all existing tests?

- Differential Testing: This is a “white-box” technique where the system compares the program state of the original code vs. the patched code using a debugger (like LLDB). If the original code crashed but the new code handles the input gracefully without changing the output, the fix is likely correct.

- Fuzzing: We run the patched code against thousands of random inputs to ensure we haven’t just moved the vulnerability to a different line.
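
Here is a scaled-down sketch of how these layers can stack. It is deliberately simplified: it checks Python source instead of C/C++, and its differential test compares return values and exception types, not debugger-level program state as described above. The function names and the `first_item` example are invented for illustration.

```python
import random


def compiles(source):
    # Layer 1: syntactic check.
    try:
        compile(source, "<patch>", "exec")
        return True
    except SyntaxError:
        return False


def load_function(source, name):
    # Execute the source in a fresh namespace and return one function.
    namespace = {}
    exec(compile(source, "<patch>", "exec"), namespace)
    return namespace[name]


def observe(func, arg):
    # Record either the return value or the type of exception raised.
    try:
        return ("ok", func(arg))
    except Exception as exc:
        return ("error", type(exc).__name__)


def differential_check(original, patched, good_inputs, crashing_inputs):
    # Layer 2: same behavior on inputs that already worked,
    # and no crash on inputs that used to crash.
    unchanged = all(
        observe(original, x) == observe(patched, x) for x in good_inputs
    )
    fixed = all(observe(patched, x)[0] == "ok" for x in crashing_inputs)
    return unchanged and fixed


def fuzz(func, trials=1000, seed=0):
    # Layer 3: throw random integer lists at the function and
    # return the first input that makes it raise, if any.
    rng = random.Random(seed)
    for _ in range(trials):
        data = [rng.randint(-5, 5) for _ in range(rng.randint(0, 6))]
        if observe(func, data)[0] == "error":
            return data
    return None


ORIGINAL_SRC = "def first_item(items):\n    return items[0]\n"
PATCHED_SRC = "def first_item(items):\n    return items[0] if items else None\n"
```

A patch would only move on to human review if `compiles` succeeds, `differential_check` passes on the known inputs, and `fuzz` on the patched function finds nothing. Real pipelines add the existing unit test suite between the first two layers.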

For those interested in the technical benchmarks, AutoPatchBench on GitHub is a fantastic resource. It’s a standardized benchmark specifically for AI repair of vulnerabilities found through fuzzing, providing a playground to test how well AI agents can fix real-world C/C++ bugs.

Ensuring Semantic Preservation and Avoiding Regressions

The biggest fear with automated fixes is a “regression”—fixing a security hole but breaking the actual feature. To prevent this, AI agents perform “root cause analysis.”

For example, if a buffer overflow is caused by incorrect stack management in an XML parser, a “lazy” AI might just add a check to stop the crash. A “smart” AI, guided by semantic analysis, will fix the underlying logic of how the stack is managed.

When you are choosing an AI SAST analysis tool, look for tools that emphasize “semantic preservation.” This ensures the AI understands the intent of your code, not just the syntax.

Leading Tools and Industry Success Rates

The market for AI-powered security fixes is heating up, with several heavy hitters leading the charge:

- GitHub Copilot Autofix: Now generally available, it integrates directly with CodeQL. It has been shown to help developers fix vulnerabilities more than three times faster than manual efforts.

- Snyk Agent Fix: (Formerly DeepCode AI Fix) This tool uses a hybrid approach, combining a deep-learning model trained on millions of lines of open-source code with a symbolic engine that verifies the fixes.

- Veracode Fix: Focuses on “human-in-the-loop” scaling. It suggests fixes but keeps the developer in the driver’s seat to ensure the final patch meets enterprise standards.

- Mend.io: Known for “Mend Renovate,” which automates dependency updates. They claim their AI-based workflows can reduce vulnerability remediation time by 80%.

If you’re feeling overwhelmed by the choices, check out our guide on 3 AI Security Audit Tools That Will Not Make You Nap for a breakdown of tools that actually deliver results.

Real-World Benchmarks for AI-Powered Security Fixes

Does it actually work in the wild? The numbers say yes.

Google recently reported that their Gemini model successfully fixed 15% of sanitizer bugs discovered during unit tests. While 15% might sound low, in a company the size of Google, that translates to hundreds of bugs patched automatically, saving thousands of hours of engineering time.

Furthermore, in the SWE-Bench Verified benchmark, which tests AI agents on real-world GitHub issues, we’ve seen models like Gemini 1.5 Pro achieve a 61.1% patch generation success rate, though only about 5-11% of those pass the most rigorous full verification checks. This shows that while the AI is getting very good at suggesting fixes, the “Verification” step we discussed earlier is still the most critical part of the process.

Language Support and Vulnerability Coverage

Currently, support is strongest for the “Big Three”: JavaScript/TypeScript, Python, and Java. These languages have massive datasets for AI training and mature static analysis engines.

However, we are seeing rapid expansion into:

- C/C++: Particularly for memory safety issues like buffer overflows and use-after-free bugs.

- Go: For concurrency issues and sanitizer bugs.

- SQL: Identifying and fixing injection points by suggesting parameterized queries or ORM best practices.

- Infrastructure as Code (IaC): Fixing misconfigured S3 buckets or open security groups in Terraform and CloudFormation.

Overcoming Challenges: Privacy, IP, and Trust

“Will the AI steal my code?” This is the #1 question we get.

Most enterprise-grade AI security tools are built with a “Privacy First” architecture. Leading providers ensure that customer code is never used to train their global models. Instead, these models are trained on permissively licensed open-source code.

When implementing these tools, you must ensure:

- Data Isolation: Your proprietary logic shouldn’t leak into the training sets of other companies.

- License Compliance: The AI shouldn’t suggest a fix that is a verbatim copy of GPL-licensed code if you are building a proprietary product.

- IP Protection: Use tools that offer “short-term caching” only, where code is deleted immediately after the fix is generated.

Addressing the “Cheating” Problem in AI Patches

There is a phenomenon in AI training called “cheating” or “superficial fixing.” An AI might “fix” a crash by simply deleting the code that crashes. Technically, the bug is gone, but so is your feature!

This is why “Human-in-the-Loop” is so important. We don’t recommend “auto-merging” security fixes without a quick human review. A developer should always look at the diff to ensure the AI hasn’t hallucinated a new library or bypassed a critical business logic check.
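
One cheap signal that can support that review is flagging patches that only remove lines. The sketch below uses Python’s standard `difflib` module; the heuristic itself and the `handle` example are assumptions for illustration, and a deletion-only patch is a reason to look closer, not proof of a bad fix.

```python
import difflib


def count_changes(original, patched):
    # Count non-blank lines added and removed between two versions.
    added = removed = 0
    diff = difflib.ndiff(original.splitlines(), patched.splitlines())
    for line in diff:
        if not line[2:].strip():
            continue  # ignore blank-line churn
        if line.startswith("+ "):
            added += 1
        elif line.startswith("- "):
            removed += 1
    return added, removed


def looks_like_deletion_only(original, patched):
    # True when the patch removes code and adds nothing in its place.
    added, removed = count_changes(original, patched)
    return removed > 0 and added == 0


# Hypothetical handler whose audit call crashes when user is None.
ORIGINAL = """def handle(request):
    audit_log(request.user)
    return render(request)
"""

# "Fix" that makes the crash disappear by dropping the audit call.
SUSPICIOUS = """def handle(request):
    return render(request)
"""

# Fix that keeps the feature and guards the failing case.
GUARDED = """def handle(request):
    if request.user is not None:
        audit_log(request.user)
    return render(request)
"""
```

`looks_like_deletion_only(ORIGINAL, SUSPICIOUS)` is true, while the guarded version is not flagged because it adds lines as well as changing one.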

Best Practices for Implementing AI-Powered Security Fixes

Ready to get started? Here is our roadmap for a smooth rollout:

- Start with “Low-Hanging Fruit”: Enable AI fixes for simple issues like dependency updates (using tools like Renovate) and well-defined linting/security errors in JavaScript.

- Incremental Rollout: Don’t turn on “Auto-PR” for every repository at once. Start with a few pilot teams and gather feedback.

- Policy Guardrails: Set rules for what the AI can and cannot touch. For example, you might allow AI to fix “High” and “Medium” vulnerabilities but require a manual security architect review for “Critical” core logic.

- Developer Education: Teach your team that the AI is an assistant, not a replacement. They are still responsible for the code they merge.
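
The incremental rollout and policy guardrails above can be written down as a small routing rule. This is a sketch under stated assumptions: the repository names, the severity labels, and the three outcomes (`auto-pr`, `manual-review`, `report-only`) are hypothetical, and the choice to leave low-severity findings as report-only is ours, not something the roadmap prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScanFinding:
    repo: str
    severity: str  # "low", "medium", "high", or "critical"


# Hypothetical pilot repositories for the incremental rollout.
PILOT_REPOS = {"billing-ui", "docs-site"}

# Severities the AI may open fix PRs for without an architect's sign-off.
AUTO_PR_SEVERITIES = {"medium", "high"}


def route(finding):
    # Repositories outside the pilot only get a report, never a PR.
    if finding.repo not in PILOT_REPOS:
        return "report-only"
    # Critical findings always go to a security architect first.
    if finding.severity == "critical":
        return "manual-review"
    if finding.severity in AUTO_PR_SEVERITIES:
        return "auto-pr"
    return "report-only"
```

Note that `auto-pr` here means the tool may open a pull request on its own; a developer still reviews and merges it.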

Frequently Asked Questions about AI Security Fixes

Can AI-generated patches introduce new security vulnerabilities?

Yes, it is possible. AI can sometimes “fix” one bug while inadvertently creating another (like a logic flaw). This is why automated verification (compilation checks, unit tests, and security rescanning) is non-negotiable. Always rescan the code after the fix is applied.

Is my proprietary source code used to train these AI models?

For reputable enterprise tools, the answer is usually no. Most providers use permissively licensed open-source data for training. However, always check the “Data Privacy” section of your vendor’s agreement to ensure they don’t use your “prompts” or “code snippets” to improve their global models.

Which vulnerability types are most effectively fixed by AI today?

AI excels at “pattern-based” fixes. This includes SQL injection, Cross-Site Scripting (XSS), insecure dependency versions, and common memory management errors in C++. It struggles more with “architectural” flaws, such as broken authentication logic or complex multi-file business logic vulnerabilities.

Conclusion

The era of manual vulnerability triage is coming to an end. By embracing AI-powered security fixes, organizations can finally close the gap between detection and remediation, allowing developers to focus on what they do best: building amazing products.

At Aman Security, we believe that security should be fast, comprehensive, and accessible. That’s why we offer free scans with instant AI explanations and fix suggestions. Whether you’re looking for automated penetration testing, SAST analysis, or infrastructure scanning, our mission is to help you stop finding problems and start shipping solutions.

Ready to see how AI can clean up your backlog? Visit Aman Security today and take your first step toward an autonomous, secure future.
